MuseMeUp captures your emotional state in real time and generates a unique, personalized music composition — shaped by how you feel and what you explore.

An innovative fusion of emotion recognition, physiological sensing, semantic knowledge, and AI music generation.

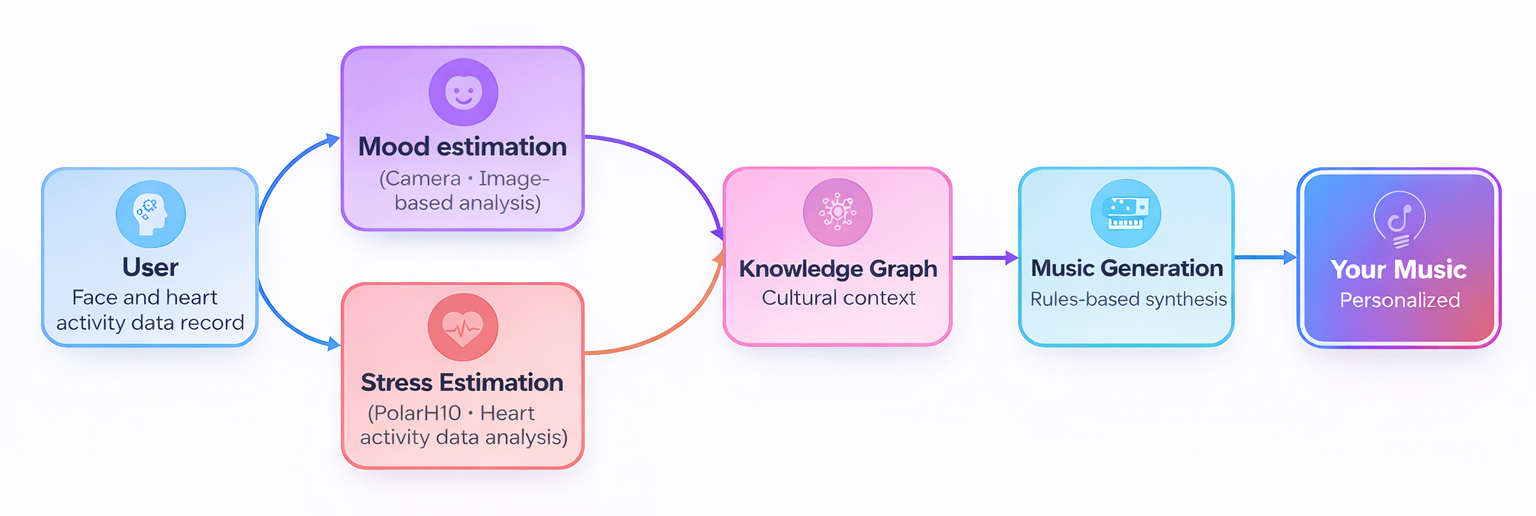

MuseMeUp is an innovative application that captures your emotional state using emotion recognition analysis and heart activity data. These signals are processed in real time by AI models and enriched with cultural semantics through a Knowledge Graph. The result is a unique music composition, tailored to your experience.

MuseMeUp was developed as part of the MuseIT project, whose mission was to create multi-sensory, inclusive, and user-centered cultural experiences through innovative interactive technologies. Although it was originally designed for museums and cultural institutions, its potential goes far beyond them: any setting where emotion and context matter can benefit from this fusion of technology, art, and human experience.

Four intelligent modules work in concert — from sensing your emotions to synthesizing your personalized soundtrack.

While you explore a cultural artifact, facial expressions and ECG are analyzed in real time. Results are enriched by the Knowledge Graph and fed into Music Generation — producing a unique piece tailored to you.

Analyzes facial expressions via webcam in real time using a Convolutional Neural Network trained on a custom dataset. The model continuously detects your emotional state throughout your experience.

Assesses stress levels via heart activity data recorded through a chest strap device (Polar H10). The stress detection component is powered by a Convolutional Neural Network, trained using a self-supervised learning approach.

A Knowledge Graph stores structured artifact metadata (creator, time period, keywords, themes). It is built with Protégé, GraphDB, and Catalink's CASPAR, and is queried in real time via SPARQL.
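
As a rough illustration of the kind of SPARQL lookup this module performs, the Python sketch below builds a query for one artifact's creator, time period, and keywords. The `ex:` namespace, the property names (`ex:creator`, `ex:timePeriod`, `ex:keyword`), and the artifact IRI are hypothetical placeholders, not the actual MuseIT ontology. The resulting string could be sent to any SPARQL endpoint, such as a GraphDB repository.

```python
# Sketch only: the namespace and property names below are hypothetical,
# not the actual MuseIT ontology.
EX_NAMESPACE = "http://example.org/museit#"


def build_artifact_query(artifact_iri: str) -> str:
    """Return a SPARQL SELECT query for one artifact's basic metadata."""
    # Reject characters that are not allowed inside an IRI reference,
    # so the value cannot break out of the <...> delimiters.
    if any(ch in artifact_iri for ch in '<>"{}|\\^` \n'):
        raise ValueError("artifact_iri contains characters not allowed in an IRI")
    return f"""PREFIX ex: <{EX_NAMESPACE}>
SELECT ?creator ?period ?keyword
WHERE {{
  <{artifact_iri}> ex:creator ?creator ;
                   ex:timePeriod ?period .
  OPTIONAL {{ <{artifact_iri}> ex:keyword ?keyword . }}
}}"""


query = build_artifact_query("http://example.org/museit#artifact42")
print(query)
```

Keywords are wrapped in `OPTIONAL` so that an artifact without any keyword still returns its creator and period.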

Music theory rules synthesize a personalized piece. Mood shapes tonality (major/minor key), while cultural context shapes instruments — from ancient lyre to modern electric guitars.
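
A minimal Python sketch of how such rules might look follows. Only the major/minor split and the lyre and electric guitar examples come from the description above. The mood labels, period names, default instrument, and all identifiers are illustrative assumptions, since the real rule set is not documented here.

```python
from dataclasses import dataclass

# Hypothetical rule tables: labels and instrument choices are illustrative.
POSITIVE_MOODS = {"happy", "surprised"}
INSTRUMENTS_BY_PERIOD = {
    "ancient": ["lyre"],
    "modern": ["electric guitar"],
}
DEFAULT_INSTRUMENTS = ["piano"]  # assumed fallback for unknown periods


@dataclass(frozen=True)
class MusicPlan:
    mode: str           # "major" or "minor"
    instruments: tuple  # instrument names for the arrangement


def plan_music(mood: str, period: str) -> MusicPlan:
    """Pick a key mode from the mood and instruments from the period."""
    mode = "major" if mood.lower() in POSITIVE_MOODS else "minor"
    instruments = INSTRUMENTS_BY_PERIOD.get(period.lower(), DEFAULT_INSTRUMENTS)
    return MusicPlan(mode=mode, instruments=tuple(instruments))


print(plan_music("happy", "ancient"))
```

Keeping the rules in plain lookup tables makes it easy to add further periods or moods without touching the selection logic.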

Here's how everything comes together in one seamless session.

You begin exploring — a painting, a historical map, a traditional garment. Your session starts.

Facial expressions and heart activity data are recorded. AI models analyze your mood and stress response continuously.

The Cultural Heritage Knowledge Graph adds historical period, themes, and style metadata about what you're exploring.

All inputs feed the Music Generation engine. A unique piece is created, played, and available to download.
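
Because the analysis runs continuously, a session yields many individual predictions that have to be condensed before music generation. One simple option, shown here as an assumption rather than the method MuseMeUp uses, is a majority vote over per-frame emotion labels plus the fraction of heart-activity windows flagged as stressed. The labels, the function name, and the output keys are hypothetical.

```python
from collections import Counter
from typing import Sequence


def summarize_session(emotions: Sequence[str], stressed: Sequence[bool]) -> dict:
    """Condense per-frame emotions and per-window stress flags.

    Majority voting is an illustrative choice, not MuseMeUp's documented method.
    """
    if not emotions:
        raise ValueError("at least one emotion prediction is required")
    # Most frequent emotion label and how many frames carried it.
    dominant, count = Counter(emotions).most_common(1)[0]
    # Share of heart-activity windows flagged as stressed (0.0 if none recorded).
    stress_ratio = sum(stressed) / len(stressed) if stressed else 0.0
    return {
        "dominant_emotion": dominant,
        "dominant_share": count / len(emotions),
        "stress_ratio": stress_ratio,
    }


summary = summarize_session(
    ["happy", "happy", "neutral", "happy"], [False, True, False, False]
)
print(summary)
```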

MuseMeUp was built for the MuseIT project — a European-funded initiative focused on multi-sensory, inclusive, and user-centered cultural experiences. While a user explores a cultural artifact, their facial expressions are captured by a webcam and their heart activity is recorded through a Polar H10 chest strap. Both data streams are analyzed simultaneously by dedicated AI models in real time.

First, log in to the MuseIT website using your credentials. Then, select the option 'Explore Artefacts'. This page includes a collection of cultural artifacts, from paintings to historical maps and traditional attire. You can navigate through the collection by scrolling through the available pages, and filter the displayed artifacts using the search bar. To use MuseMeUp, click the green camera button located next to the filters search bar. This starts the mood estimation app, which captures your facial expressions and detects your emotion. While the camera is recording, you can explore the collection by clicking on the artifacts that interest you. When you're done, click the red 'Stop' button to stop the recording. After a few seconds, a music piece personalized to your experience will be generated and made available for download. Simply click the blue 'Download' button.


A few seconds after stopping, your music piece is generated and made available. The blue Download button appears near the filter bar; click it to save your unique composition. The music is entirely shaped by your session: for example, happy expressions while exploring a Renaissance painting produce an uplifting major-key melody with period-appropriate instruments.

MuseMeUp was piloted in dedicated workshops as part of the MuseIT project. Visitors interacted with the application through the MuseIT platform while browsing cultural artifacts online, and the system captured and analyzed their emotional responses. The model decisions were fed into the Cultural Heritage Knowledge Graph, which combined them with related cultural context. The results were then sent to the Music Generation component, which produced a personalized piece of music.

The setup is minimal — two pieces of hardware are all it takes for the full MuseMeUp experience.

With a built-in or external webcam for facial expression capture and mood estimation.

Wearable ECG sensor for real-time heart activity monitoring and stress level assessment.

Required to access the MuseIT platform and retrieve Knowledge Graph context in real time.

MuseMeUp creates value for both visitors and the institutions that host them.

Dive deeper into the technology and research behind MuseMeUp.

The full story of how MuseMeUp was built, how it works, and what it means for cultural experiences.

A deep dive into the ontology design, GraphDB integration, and CASPAR-powered data pipeline.

How a recommendation system was built on top of the Cultural Heritage Knowledge Graph.

The backbone tool that powers the Knowledge Graph population in MuseMeUp.

The engineers and researchers who built MuseMeUp.

Get in touch with our team to arrange a full live demo — including ECG capture, real-time emotion analysis, and personalized music generation.